How B2B Sales Did Not Teach Me About CloudFront Functions

You’ve probably seen the posts:

- “How B2B sales helped me run a marathon”

- “How cold calling made me a better engineer”

This isn’t that. Unfortunately.

Redirects, DNS, and Terraform

This one started simple: I wanted to redirect the apex domain (vakintosh.com) to the www subdomain.

flowchart TD

Start([Start]) --> A[User types vakintosh.com]

A --> B[/Browser sends HTTP request/]

B --> C[DNS resolves apex domain to CloudFront edge node]

C --> D[CloudFront Function fires on Viewer Request event]

D --> E{Is host vakintosh.com?}

E -->|Yes| F[Return 301 Redirect Location: www.vakintosh.com]

F --> G[/301 Response sent to browser/]

G --> H[Browser follows redirect to www.vakintosh.com]

H --> I[CloudFront forwards request to S3 origin]

E -->|No| J[Rewrite URI e.g. /blog → /blog/index.html]

J --> I

I --> K[/Static content served from S3/]

K --> Finish([Finish])

- The user’s browser sends a request to vakintosh.com, which DNS resolves to a CloudFront edge node.

- A CloudFront Function fires on the Viewer Request event, before the request ever reaches the S3 origin.

- If the host is the apex domain, the function returns a 301 redirect to www.vakintosh.com directly from the edge.

- If the host is already www, the function rewrites pretty URLs (e.g. /blog → /blog/index.html) before forwarding to S3.

I figured I’d just do it manually in the Porkbun DNS console. Bad idea.

Porkbun doesn’t warn you that editing an ALIAS record replaces the original record entirely, and the original was exactly the record Terraform had created and was tracking. So now I had:

- A new manual record in DNS

- A Terraform state file that still thought the old one existed

- No way to reconcile the two without Terraform throwing a fit

Here’s what I had to do:

flowchart TD

Start([Start]) --> A[/"aws s3 cp s3://bucket/terraform.tfstate . Download state from S3"/]

A --> B[Comment out backend block in provider.tf]

B --> C[terraform init reinitialize against local state file]

C --> D["terraform state rm porkbun_dns_record.root_domain_alias"]

D --> E["terraform import porkbun_dns_record.root_domain_alias vakintosh.com"]

E --> F[Re-enable backend block in provider.tf]

F --> G[terraform init prompts to copy local state → S3]

G --> H[terraform plan verify no errors]

H --> Finish([Finish])

The backend block in provider.tf had to be commented out because the changes to the state file were being made locally. If the backend was still enabled, Terraform would try to sync with S3 first and throw an error because the state there was out of date.

After all that, terraform plan finally ran without errors, and the fixed state file was back in S3.

CloudFront Functions

I chose not to use the S3 website endpoint, even though it’s simpler, because that requires making the bucket publicly readable, which breaks the “least privilege” model.

Instead, I went full CloudFront:

- Created a CloudFront Function named redirect that handles 301s from the apex domain to the www subdomain at the edge.

- Also needed to rewrite pretty URLs like /about or /blog/ to Hugo’s static files (e.g. /blog/index.html), since CloudFront doesn’t natively support directory-style routing.

- Used Terraform’s templatefile() to inject var.domain_name directly into the function code.

- Attached the function to the Viewer Request event of my existing CloudFront distribution.

- Removed the need for:

  - A separate redirect S3 bucket.

  - A second CloudFront distribution.

  - DNS trickery with Porkbun aliases.

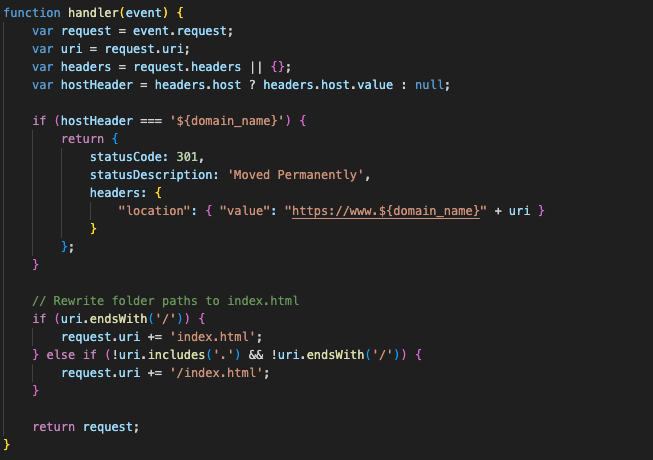

CloudFront Function (simplified):
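A minimal sketch of a function with this shape follows. It is not the exact deployed code: APEX_DOMAIN is a hypothetical stand-in for the value templatefile() would inject from var.domain_name, and the "no file extension in the last path segment" test used to spot pretty URLs is an assumption about how the rewrite decides what to touch.

```javascript
// Hypothetical stand-in for the domain that Terraform's templatefile()
// would inject from var.domain_name.
var APEX_DOMAIN = 'vakintosh.com';

// Viewer Request handler: redirect the apex host, otherwise rewrite pretty URLs.
function handler(event) {
  var request = event.request;
  var host = request.headers.host ? request.headers.host.value : '';

  // Apex host: answer straight from the edge with a permanent redirect to www.
  // Note: this sketch keeps the path but drops any query string.
  if (host === APEX_DOMAIN) {
    return {
      statusCode: 301,
      statusDescription: 'Moved Permanently',
      headers: {
        location: { value: 'https://www.' + APEX_DOMAIN + request.uri }
      }
    };
  }

  // Pretty URLs: map directory-style paths onto Hugo's index.html files.
  if (request.uri.endsWith('/')) {
    request.uri += 'index.html';
  } else if (request.uri.lastIndexOf('.') <= request.uri.lastIndexOf('/')) {
    // Last path segment has no file extension, e.g. /blog or /about.
    request.uri += '/index.html';
  }

  // Returning the request lets CloudFront continue on to the S3 origin.
  return request;
}
```

The sketch sticks to var and plain string methods so it does not depend on newer runtime features. Paths that already name a file, such as /css/main.css, pass through untouched.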

CloudFront Functions are fast, but they come with caveats: a small size limit, limited logging (console.log output only shows up in CloudWatch Logs, in us-east-1), and no real debugger. But this little redirect + rewrite combo meant I could use CloudFront without Porkbun alias records, without leaking my S3 bucket to the internet, and without Hugo breaking every time someone wanted to access /blog or /about.

And that was enough.

Security Headers and CSP

I also took this opportunity to tighten security headers by creating a CloudFront Response Headers Policy called secure-headers-with-csp.

Included headers:

- Strict-Transport-Security: 2-year max-age, includeSubDomains, preload

- X-Frame-Options: DENY

- X-Content-Type-Options: nosniff

- Referrer-Policy: strict-origin-when-cross-origin

- X-XSS-Protection: 1; mode=block

- Content-Security-Policy: locked-down as hell
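One way to confirm a policy like this is actually being applied is to compare a response's headers against the expected set. The sketch below does that. findHeaderProblems and EXPECTED_HEADERS are hypothetical names, and the exact Strict-Transport-Security string (2 years is 63072000 seconds) is an assumption about how the header is serialized. The CSP value is not listed here, so the check only requires that the header be present.

```javascript
// Expected values taken from the header list above. null means
// "must be present, any value" (the CSP value itself is not given).
var EXPECTED_HEADERS = {
  // 2 years = 2 * 365 * 24 * 3600 = 63072000 seconds (assumed serialization).
  'strict-transport-security': 'max-age=63072000; includeSubDomains; preload',
  'x-frame-options': 'DENY',
  'x-content-type-options': 'nosniff',
  'referrer-policy': 'strict-origin-when-cross-origin',
  'x-xss-protection': '1; mode=block',
  'content-security-policy': null
};

// Hypothetical helper: takes a plain object of response headers (any key
// casing) and returns a list of human-readable problems, empty if all is well.
function findHeaderProblems(actualHeaders) {
  var normalized = {};
  Object.keys(actualHeaders).forEach(function (name) {
    normalized[name.toLowerCase()] = String(actualHeaders[name]).trim();
  });

  var problems = [];
  Object.keys(EXPECTED_HEADERS).forEach(function (name) {
    var expected = EXPECTED_HEADERS[name];
    if (!(name in normalized)) {
      problems.push('missing: ' + name);
    } else if (expected !== null && normalized[name] !== expected) {
      problems.push('unexpected value for ' + name + ': ' + normalized[name]);
    }
  });
  return problems;
}
```

On Node 18 or later, the headers from a fetch() response can be turned into a plain object with Object.fromEntries(response.headers) and passed straight in.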

GitHub Actions

Since this was part of my Terraform GitHub Actions workflow, I took the chance to clean it up.

- terraform-apply now requires manual approval via workflow_dispatch

  - I would’ve preferred GitHub Environments with required reviewers, but they weren’t available for my private repo.

- Enabled CloudWatch Logs delivery for CloudFront using AWS-managed delivery sources/destinations.

To support CloudWatch logs delivery, I had to give CloudFront permission to manage its own logging infra. That meant writing an inline IAM policy attached to my deployment role.

Policy highlights:

- Grants permission to manage a named delivery source.

- Grants permission to manage a named delivery destination.

- Enables tagging of both resources.

- Grants CloudFront permission to log to that destination.

- Adds global permissions for logs:delivery:* actions.

Finally, a Website

I finally deployed my website to S3 with Hugo. I’m using a custom theme called adritian-free-hugo-theme. I updated the content and replaced all the stock pages with my own. It’s minimal, clean, and doesn’t look like a template. That was the goal.

Deployment is currently handled via CLI using:

hugo deploy --target production --invalidate --config config.toml,config.production.toml

I also started building a separate GitHub Actions workflow for deployment, but I hit a snag:

Could not assume role with user credentials: User: arn:aws:sts::***:assumed-role/GitHubAction-AssumeRoleWithAction/GitHub_to_AWS_via_FederatedOIDC is not authorized to perform: sts:TagSession on resource: ***

Apparently, sts:TagSession is now required for OIDC-based role assumption if you’re passing tags, and GitHub’s OIDC provider does that automatically. I’ll need to update the trust policy or attach an additional permission to my GitHub federated principal to fix this. Another rabbit hole for tomorrow.

Summary

- Hugo static sites can play nicely with strict CSP rules, as long as I keep inline scripts and styles to an absolute minimum (or serve them externally)

- If I do need inline scripts later, I’ll need to use nonce- values or sha256- hashes, but I’d rather not go down that road unless I have to

- Never manually edit DNS for Terraform-managed records unless I enjoy pain

- Don’t forget to fix the GitHub Actions role permissions before I forget what sts:TagSession even is
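For the sha256- route mentioned above, the value that goes into the policy is the base64-encoded SHA-256 digest of the inline script's exact text. A minimal sketch using Node's built-in crypto module follows. cspHashFor and the sample snippet are hypothetical, for illustration only.

```javascript
var crypto = require('crypto');

// Returns the CSP source expression for an inline script: 'sha256-<base64>'.
// The input must be the exact text between <script> and </script>,
// whitespace included, or the browser's own hash will not match.
function cspHashFor(inlineScript) {
  var digest = crypto
    .createHash('sha256')
    .update(inlineScript, 'utf8')
    .digest('base64');
  return "'sha256-" + digest + "'";
}

// Hypothetical inline snippet, not taken from the actual site.
var snippet = "document.documentElement.classList.add('js');";
console.log("script-src 'self' " + cspHashFor(snippet));
```

Any edit to the inline script, even a changed space, produces a different hash, which is a good argument for keeping scripts external.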

If you’re reading this because you’re stuck somewhere in the Terraform + S3 + CloudFront + GitHub + DNS mess, just know: it does work. Eventually.